MCP Makes Product Ops AI-First

Last week was a huge deal for AI power-users in the Product Ops field. Pendo published their Autumn 2025 release and it was full of big changes, including:

- Pendo’s own Agent Mode: unifying their previous “junior agents” into a single, cohesive UX

- Agent Analytics: Pendo can now track “rage prompts” – which, as more apps go AI-first, are becoming just as relevant to knowing user sentiment as the classic “rage clicks”

- Updates to Pendo Predict: which predicts when customers are likely to churn – we recently wrote about that here.

But this week, we’re focused on what we think is the most game-changing update of them all: the launch of Pendo’s external MCP (Model Context Protocol) server. It’s especially impactful because it came just two days after OpenAI launched its new Apps SDK. This AI double-whammy will change how people access and use product data, and it’s a first step toward making ProdOps an “AI-first” discipline. But MCP is also a very new concept, so what is it and why is it important?

Welcome to Beyond The Click by Balboa Solutions. In today's issue we're dissecting MCP for Product Ops, including:

- How we got here: context & capability

- A crash course on MCP

- How the Pendo MCP changes ProdOps

- Digging deeper: your own MCP future

Let's dive in.

How we got here: context & capability 🎢

To understand the significance of MCP for Product Ops, it helps to look at the rapid journey we’ve been on with AI, which has made a lot of progress since GPT-3.5 took the world by storm in 2022. Using ChatGPT as our example, this (abbreviated) timeline shows just how far we’ve come:

- Nov 2022: ChatGPT launches, its only context being its training data (Sep ’21 cutoff) and whatever text you put in the prompt.

- Mar 2023: File uploads and analysis enabled, expanding context and output.

- Jun 2023: API-based function calling becomes ChatGPT’s first direct interaction point with outside resources.

- Oct 2023: "Browse with Bing" gives ChatGPT live web access, meaning it no longer relies purely on the training data to answer questions.

- May 2024: Multi-modal inputs (text/image/audio) accepted via GPT-4o, moving the platform beyond the simple text modality.

- Mar 2025: OpenAI adopts the Model Context Protocol.

- Jun 2025: “Connectors” become ChatGPT’s first native 3P app interaction feature, giving it live, read-only access to Google Workspace, Outlook, etc.

- Jul 2025: Agent Mode can directly access and edit any web app you can get it logged into, just like a human user would – no need to set up connections.

- Oct 2025: Apps SDK enables embedding of rich elements (maps, charts, etc. – not just text) from 3P apps directly in ChatGPT.

This progress can broadly be bucketed into context (what AI can tell you) and capability (what AI can do for you). The former relates to the classic “generative” use cases, while the latter relates to the more recent “agentic” use cases. Many items on this timeline impacted both.

MCP is the latest development in this timeline, and it stands to be one of the biggest (maybe on par with live web access!). So what is it and how does it work? 👇

A crash course on MCP 🚧

Model Context Protocol (our definition): a way to make your AI model more capable and better informed by accessing other systems

- Model: AI models, e.g. LLMs

- Context: what data and tools the model has access to when trying to answer your prompt

- Protocol: a set of rules for how data is formatted, transmitted, and received between two parties (e.g. HTTP for the web, Bluetooth for short-range wireless, OAuth for authorization, and SWIFT for banking)

MCP was launched by Anthropic in late 2024 and adopted by OpenAI in early 2025, and it is also usable with open-source models (e.g. Llama, Mistral). It remains to be seen whether the other major AI platforms (e.g. Gemini) will adopt it, but the momentum is clear.

How it works

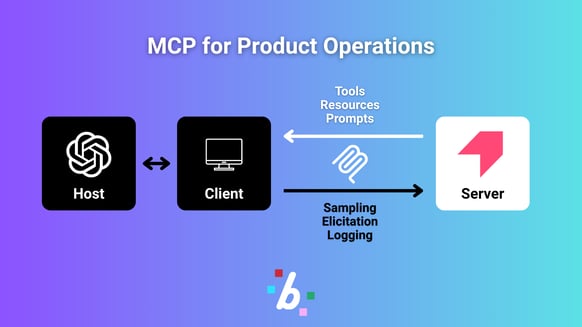

First, you’ve got the MCP Host, which is an AI app. Then, you’ve got the MCP Server, which is an app, program, or data source that the Host references for context. Servers can be local (e.g. the filesystem on your device) or remote (e.g. a cloud-based SaaS app), and the Host connects with Servers by spinning up a unique MCP Client for each one. To illustrate, we’ll use ChatGPT as our Host and Pendo as our Server here.
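To make the Host, Client, and Server relationship concrete, here is a minimal Python sketch. It models the structure only and does not speak the real protocol. The class names `McpHost` and `McpClient` and the server name `local-filesystem` are our own illustrative assumptions, not part of any SDK.

```python
from dataclasses import dataclass, field


@dataclass
class McpClient:
    """One client holds the connection to exactly one server."""
    server_name: str
    transport: str  # "stdio" for local servers, "http" for remote ones


@dataclass
class McpHost:
    """The AI app. It creates a dedicated client for every server it connects to."""
    name: str
    clients: dict[str, McpClient] = field(default_factory=dict)

    def connect(self, server_name: str, remote: bool) -> McpClient:
        transport = "http" if remote else "stdio"
        client = McpClient(server_name, transport)
        self.clients[server_name] = client
        return client


host = McpHost("ChatGPT")
host.connect("Pendo", remote=True)              # cloud-based SaaS app
host.connect("local-filesystem", remote=False)  # hypothetical local server

for client in host.clients.values():
    print(f"{host.name} -> {client.server_name} via {client.transport}")
```

The point of the one-client-per-server design is isolation: each connection has its own session, so adding or dropping a server does not disturb the others.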

MCP defines the schema and semantics by which ChatGPT and Pendo communicate. So, what exactly are they sharing with each other?

Server primitives (Pendo provides…)

- Tools: executable functions, like an API call or a database query

- Resources: data sources (the “context”)

- Prompts: templates for the LLM that help structure the interaction
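Under the hood, MCP messages are JSON-RPC 2.0, and each server primitive has a "list" method the client can use for discovery. The sketch below only builds the messages a client might send; it does not open a connection. The tool name `get_guide_metrics` and its `last_n` argument are hypothetical and are not taken from Pendo's actual server.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched to them


def request(method, params=None):
    """Build a JSON-RPC 2.0 request as a plain dict."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Discovery: ask the server what it offers for each primitive.
discover = [
    request("tools/list"),
    request("resources/list"),
    request("prompts/list"),
]

# Invocation: call one tool by name with arguments (names are hypothetical).
call = request("tools/call", {
    "name": "get_guide_metrics",
    "arguments": {"last_n": 5},
})

print(json.dumps(call, indent=2))
```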

Client primitives (ChatGPT provides…)

- Sampling: lets the server request a language model completion (e.g. “here’s a list of options, recommend the best one”)

- Elicitation: lets the server request additional info or confirmation from the AI end user

- Logging: lets the server send log messages to the AI app (for debugging/monitoring)
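Client primitives flow in the opposite direction: the server is the one sending the message. As a rough sketch, the three primitives above could look like the JSON-RPC messages below. The method names follow the MCP specification as we understand it, while the message texts and the `app_name` field are invented for illustration.

```python
import json


def server_request(req_id, method, params):
    """A JSON-RPC request from server to client (a reply is expected)."""
    return {"jsonrpc": "2.0", "id": req_id, "method": method, "params": params}


def server_notification(method, params):
    """A JSON-RPC notification: it has no "id", so no reply is expected."""
    return {"jsonrpc": "2.0", "method": method, "params": params}


# Sampling: the server asks the host's model for a completion.
sampling = server_request(1, "sampling/createMessage", {
    "messages": [{
        "role": "user",
        "content": {"type": "text",
                    "text": "Here is a list of options, recommend the best one: A, B, C"},
    }],
    "maxTokens": 200,
})

# Elicitation: the server asks the end user for extra input.
elicitation = server_request(2, "elicitation/create", {
    "message": "Which application should this report cover?",
    "requestedSchema": {
        "type": "object",
        "properties": {"app_name": {"type": "string"}},
        "required": ["app_name"],
    },
})

# Logging: the server pushes a log line to the client.
log = server_notification("notifications/message", {
    "level": "info",
    "data": "Report query finished",
})

for message in (sampling, elicitation, log):
    print(json.dumps(message))
```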

From these primitives, we can see two distinct use cases: “tell” (the AI reads data and reports back) and “do” (the AI takes action in the other system). “Tell” works with a read-only MCP server, while “do” requires a read/write one.
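One way a host could separate “tell” from “do” is with tool annotations. The MCP specification lets a server attach an optional `readOnlyHint` to each tool, and this sketch sorts tools on that hint. The tool names are hypothetical, and the hint is advisory only: a host should not treat it as a security guarantee.

```python
# Tool descriptions as a server might return them (names are hypothetical).
tools = [
    {"name": "compare_guides", "annotations": {"readOnlyHint": True}},
    {"name": "deploy_guide", "annotations": {"readOnlyHint": False}},
    {"name": "legacy_export"},  # no annotations at all
]


def is_tell(tool):
    """Treat a tool as read-only only when it explicitly says so."""
    return tool.get("annotations", {}).get("readOnlyHint", False)


tell = [t["name"] for t in tools if is_tell(t)]
do = [t["name"] for t in tools if not is_tell(t)]

print("tell:", tell)
print("do:", do)
```

Defaulting to “do” when the hint is missing is the cautious choice, since an unannotated tool might change data.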

Why is this important?

MCP matters because it is a one-to-many system. Once you get your MCP server live, your product is now connectable with every AI app on the protocol. Today, this is most relevant for the big chatbots like ChatGPT and Claude. But considering that (1) very soon, ALL apps will be AI apps, and (2) MCP is quickly approaching HTTP-level industry standardization, the future interoperability you’d get launching an MCP server today is staggering.
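The one-to-many benefit is easy to see with some back-of-the-envelope arithmetic. Without a shared protocol, every AI app needs a custom integration with every product. With one, each side implements the protocol once. The counts below (4 AI apps, 50 products) are made-up numbers for illustration.

```python
def point_to_point(ai_apps: int, products: int) -> int:
    """Every AI app builds a custom integration with every product."""
    return ai_apps * products


def shared_protocol(ai_apps: int, products: int) -> int:
    """Each AI app and each product implements the protocol once."""
    return ai_apps + products


print(point_to_point(4, 50))   # 200
print(shared_protocol(4, 50))  # 54
```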

So now that we understand MCP, why did Pendo take the plunge? 👇

How the Pendo MCP changes ProdOps 💡

In short, Pendo on MCP enables the Product org to achieve one of its most strategic goals: drive data-driven collaboration across the whole business. Leaders across product, customer success, marketing, executive, and more all need access to the product analytics data to make product-led decisions. But this is a gap in most enterprises, since getting the whole business onboarded in Pendo isn’t feasible. And even if it were, adopting a new tool is hard for humans, especially busy ones. What you need is a UI that your whole business already uses weekly, that can also pull specific product insights, self-serve, via a natural language prompt.

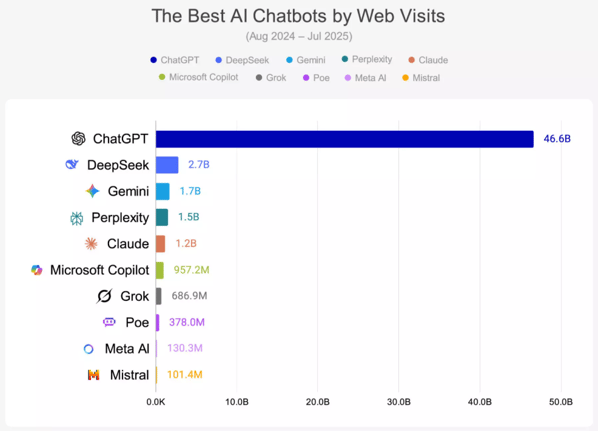

With Pendo’s MCP launch, that UI is now ChatGPT. Or Claude. Or Perplexity. Or whatever other MCP-enabled AI chatbot your people are already used to. And because of the new Apps SDK launch, ChatGPT users can even get those insights in the form of a rich element, like a graph or a table, served straight from Pendo. All without leaving the “universal chatbot” interface we’ve all become accustomed to.

Source: OneLittleWeb, "The AI Big Bang Study 2025"

It’s important to note that the Pendo MCP server is currently read-only, so its use cases are all in the “tell” category. That means you can’t use ChatGPT to deploy a guide, but you can use it to compare the performance of your last 5 guides. To actually make changes, ChatGPT will prompt you to log into the Pendo UI and work there. Many software companies (e.g. HubSpot) are starting their MCP servers as read-only at this early stage, but we’re predicting they’ll incorporate write functionality as the tech matures.

The real value of Pendo on MCP is in the “one-to-many” aspect we mentioned earlier. Imagine a business leader running a ChatGPT prompt that aggregates data from your Pendo instance, your CRM, your HRIS, and more. This sort of cohesive workflow makes your product data very useful and actionable to all teams, and it makes ProdOps that much more strategic in the organization.
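As a toy illustration of that aggregation, the sketch below fans one question out to two servers and merges the answers. The functions `pendo_usage` and `crm_record` are stubs standing in for real MCP tool calls, and the values they return are placeholders, not real data.

```python
from typing import Callable

ToolCall = Callable[[str], dict]


def pendo_usage(account: str) -> dict:
    """Stub for a read-only analytics tool call (placeholder value)."""
    return {"weekly_active_users": 120}


def crm_record(account: str) -> dict:
    """Stub for a read-only CRM tool call (placeholder value)."""
    return {"renewal_date": "2026-01-31"}


def aggregate(account: str, servers: dict[str, ToolCall]) -> dict:
    """Ask every connected server about one account and merge the answers."""
    merged: dict = {"account": account}
    for server_name, call in servers.items():
        merged[server_name] = call(account)
    return merged


summary = aggregate("Acme Corp", {"pendo": pendo_usage, "crm": crm_record})
print(summary)
```

In a real host, the model decides which tools to call. The merge step is what turns separate answers into one response for the business leader.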

But as cool as that is, the impact MCP will eventually have on ProdOps goes much deeper. 👇

Digging deeper: your own MCP future ⛏️

Every reason we just gave for why an MCP server is great for Pendo also applies to your own product. It doesn’t matter what vertical you’re in, be it marketing automation, financial forecasting, or dance studio management – your product can be extended into the AI chatbots (and maybe it already has!).

Under this new paradigm, product usage analytics will fundamentally change. Traditional metrics like page views and feature clicks may become less significant. In-app user behavior may skew toward one use case, because users are doing the others entirely through ChatGPT. Some users may stop logging into your app at all!
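One simple way to watch for this shift is to tag each interaction with the channel it came through and track the AI share per use case. The event schema (`use_case`, `channel`) and the sample events below are hypothetical. This is a sketch of the idea, not a Pendo feature.

```python
from collections import Counter, defaultdict

# Hypothetical interaction log: "ui" = in-app, "ai" = via an AI chatbot.
events = [
    {"use_case": "reporting", "channel": "ai"},
    {"use_case": "reporting", "channel": "ai"},
    {"use_case": "reporting", "channel": "ui"},
    {"use_case": "configuration", "channel": "ui"},
]


def ai_share_by_use_case(events):
    """Return the fraction of interactions per use case that came through AI."""
    counts = defaultdict(Counter)
    for event in events:
        counts[event["use_case"]][event["channel"]] += 1
    return {
        use_case: channels["ai"] / sum(channels.values())
        for use_case, channels in counts.items()
    }


shares = ai_share_by_use_case(events)
for use_case, share in shares.items():
    print(f"{use_case}: {share:.0%} via AI")
```

A use case whose AI share keeps climbing has not necessarily lost engagement in the app. It may simply have moved channels.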

Product Ops teams have to adapt to this new environment and measure the success of a product through its AI interactions, not just its user interactions. This frontier is so new and rapidly changing that we’ll refrain from predictions (for now). But if anything is clear, it’s that, thanks to MCP, the age of AI interoperability is here, and the Product org has got to adapt.
